Brain training

AI triumphalism and (I hope) countertrolling

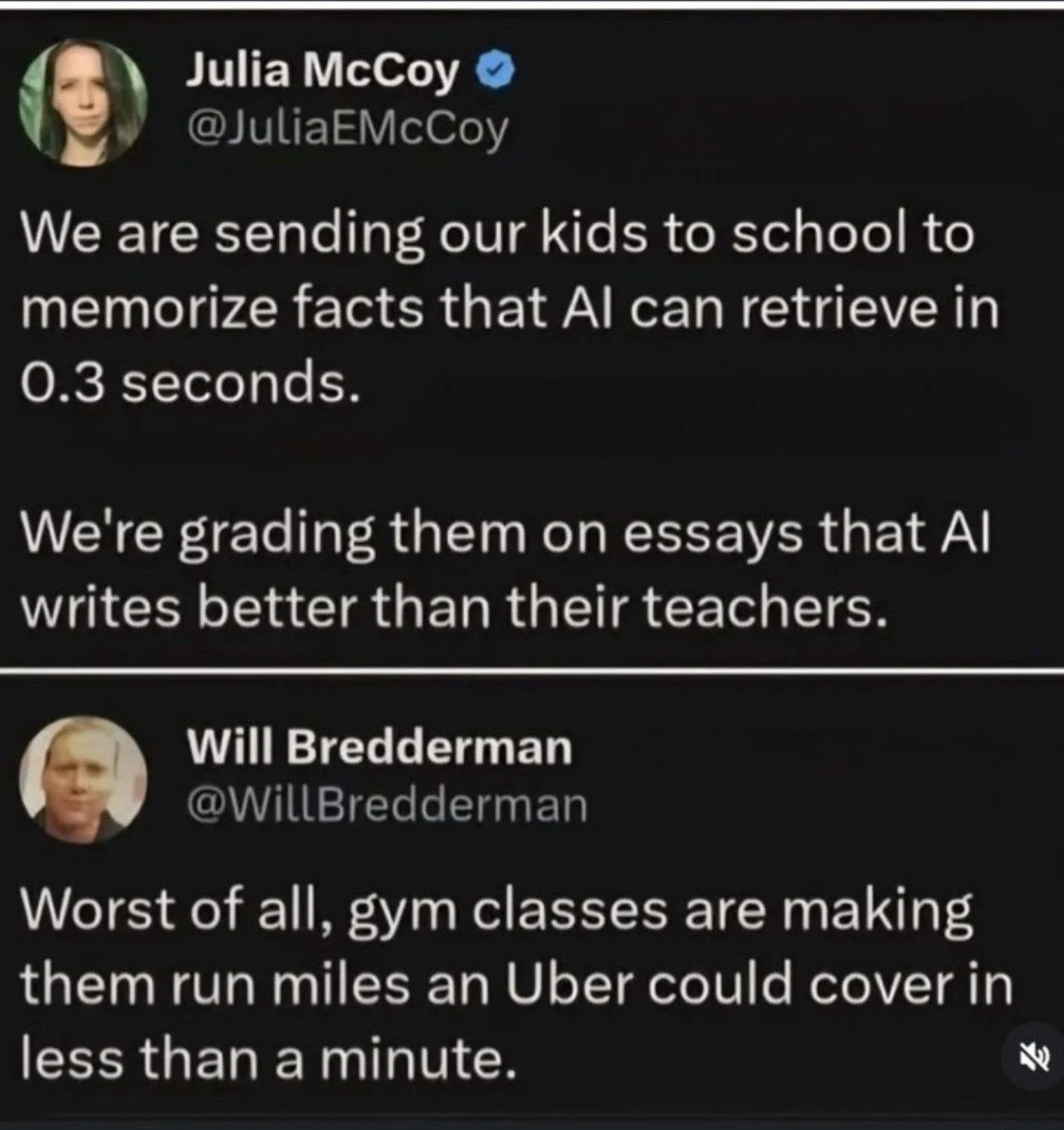

The above exchange went slightly viral recently, and I had a chuckle at the trolling response. More to the point, I’m not sure what the original poster was trying to say, even if we accept her (questionable) claims. She shows a triumphalism about AI that I think isn’t at all warranted, but if you zoom way, way out, the basic contours of her point are important. AI is genuinely challenging the purpose and practice of education. I don’t have the answers, but I think it’s useful to consider the question in a bit more detail. What is education for, how does AI interfere with that, and how might we respond?

This reminded me of a very interesting conversation I had with one of my cousins last summer. I was a nerd at every stage of my education, so I never really thought about why I was in school, apart from vague notions of “being a good citizen” or “the disinterested pursuit of knowledge” or whatnot. I meant what I said. Nerd. And I was an English major, so the practical consequences of my education were always hazy. But my cousin pointed out that standardized education was set up to train workers for the 20th-century economy. It trained not only skills but a certain mindset and way of communicating. The drills we did were to train those attributes. Now AI is forcing a reevaluation because, unlike previous technologies, it really can replace a lot of the mental effort required to produce a given output like an essay, and also because its capabilities are changing so quickly.

So it’s worth asking ourselves: is education primarily about giving you a set of skills (preparing you to earn) or is it more like optimizing your potential (equipping you to do things you want to do)? To put it another way, is it for skills or health? Employability or personal development? AI undercuts both those efforts, though in different ways.

On the employability front, a highly capable AI will obviously lead to some degree of job losses; less-capable models already have. But the more insidious point is that it’s currently impossible to say what AI will be able to do in 6 months, or 6 years, or 20 years. So it’s impossible to say which human skills will be most employable. As a parent of two school-age kids, I find this a very pressing question. I have some guesses, but it’s hard to build an educational curriculum around guesses.

But of course filling an economic need is not the only dimension of the story, or at least God help us if it is. As the gym class troll points out above, it’s still completely worthwhile to learn and practice things that machines can do better than humans. Such abilities can be important on their own terms.

My intellectual man-crush John Milton wrote a tract about education in which he described the purpose thusly: to equip “a man to perform justly, skillfully and magnanimously all the offices both private and public of Peace and War.” He did mean “man” in the exclusive sense, but let’s gloss over that for now. Because he did mean “all the offices” too: from languages and grammar to mathematics and navigation, to medicine and engineering, to combat and ethics. This would be an intense 9-year course of reading, instruction and practice, and at the end of it the student would be fit for any and every purpose, whether that was employment, theological vocation, artistic production or, say, overthrowing and reforming an oppressive monarchical system of government. Milton saw education as the process of shaping virtuous, capable citizens, and I think that we have to get back to some version of that model, though ideally one that is less misogynistic and less exclusively Christian. Education can develop a skillset and mindset that helps a person thrive even if they don’t slot neatly into a particular job. Of course, having a job is still important, but the lesson of AI thus far seems to be that training with employability as the end goal is a losing proposition. The requirements will likely change along the way.

To get back to the post above, the point of having kids memorize facts is not to have a reliable repository of facts; it’s to give the kids some framework for how the world works and how the various pieces fit together. You can’t even intelligently look things up—or judge the validity of what you find—if you have no idea of the context for your question. Similarly, the point of a teacher setting an essay is not to accumulate texts of a certain quality. The point is to help the student be able to think and write. I think that distinction between method and aim is one that students will have to learn too, because until quite recently you generally had to do the work to get the results, and if you did the work you almost couldn’t help gaining some skill. Now AI can deliver skill-free results, and I suspect one of the major intellectual and ethical challenges of AI technology will be this demand on its users to either embrace or avoid its corrosive effects on the very skills they most value. All this has shed some additional light on an instinctual reaction I described in one of my first posts here: I’m not using AI tools in my own writing, and have no plans to. *

* I came across this quote in an article by Albert Burneko, who echoes how I feel about the subject and puts it much more evocatively: “Using an AI chatbot to help me write would be like using the kitchen garbage disposal to help me eat dinner. The garbage disposal absolutely can do something like chewing and swallowing the food, faster and more efficiently than my jaws, tongue, teeth, and throat can do it. But if I have the garbage disposal do the chewing and swallowing for me, then I get none of the flavor or nutrition or satisfaction of eating food. I'm just making a big noisy show of wasting something I need.”